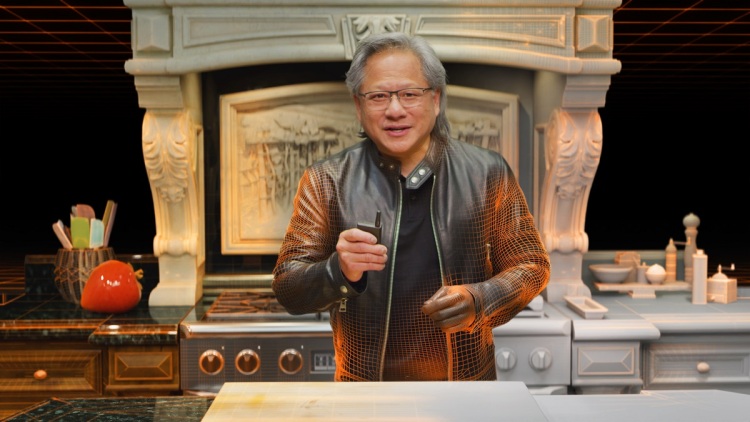

Jensen Huang interview: Nvidia’s post-Arm strategy, Omniverse, and self-driving cars

SOURCE: VENTUREBEAT.COM

FEB 20, 2022

Nvidia CEO Jensen Huang didn’t get to fulfill his dream of acquiring Arm for $80 billion. Regulators held the deal up, and Huang called it off after “giving it our best shot.”

But his company is still going strong. Nvidia reported revenues of $7.64 billion for its fourth fiscal quarter ended January 30, up 53% from a year earlier. Gaming, datacenter, and professional visualization market platforms each achieved record revenue for the quarter and year.

That shows the company has a lot of breadth as it figures out what it will do in the wake of the Arm deal’s collapse. And Huang said he has high hopes for his three-chip strategy, the Omniverse, the metaverse, and self-driving cars. I had a short time to interview Huang after the company reported earnings this week.

Here’s an edited transcript of our interview.

VentureBeat: What is your post-Arm strategy? Do you have to communicate your strategic direction in light of [the Arm deal being called off]?

Jensen Huang: Not really anything. Because we never finished combining with Arm. So any strategies that would have come from the combination were never talked about. And so our strategy is exactly the same. We do accelerated computing for wherever there are CPUs (central processing units). And so we’ll do that for x86. And we’ll do that for Arm. We have a whole bunch of Arm CPUs and system-on-chips (SoCs) in development. And we’re enthusiasts. We do all that. We have a 20-year license to Arm’s intellectual property. And we’ll continue to take advantage of all that and all the markets. And that’s about it. Keep building CPUs, GPUs (graphics processing units), and DPUs (data processing units).

VB: So it’s your three-chip strategy? Would you consider RISC-V now that the Arm deal is not happening?

Huang: We use RISC-V. We’re RISC-V users inside our GPUs. We use it in several areas. For system controllers, inside the BlueField DPU, there is a RISC-V acceleration engine, if you will, a programmable engine. And we use RISC-V when it makes sense. We use Arm when it makes sense. We use x86 when it makes sense.

VB: And how are you viewing progress on the metaverse? It seems like everybody’s a lot more excited about the metaverse, and the Omniverse is always part of that conversation.

Huang: Let’s see. Omniverse for enterprise is being trialed and being tested in about 700 or so different companies around the world. We have entered into some major licenses already. And so our numbers are off to a great start. People are using it for all kinds of things. They’re using it for connecting designers and creators. They’re using it to simulate logistics warehouses, simulate factories. They’re using it for synthetic data generation because we simulate sensors physically and accurately. You could use it for simulating data for training AIs that are collected from LiDAR, radars, and of course cameras. And so they’re using it for simulation as part of the software development process. You need to validate software as part of the release process. And you can put Omniverse in the flow of robotics application validation. People are using it for digital twins, too.

A scene from an Ericsson Omniverse environment.

VB: You’re going to make the biggest digital twin of all, right? [Nvidia plans to make a digital twin of the Earth as part of its Earth 2 simulation, which will use AI and the world’s fastest supercomputers to simulate climate change and predict how the planet’s climate could change over decades].

Huang: We’re off building or scoping out the architecture and building the ultimate digital twin.

VB: And do you feel like we’re also heading towards an open metaverse? Will it be sufficiently open as opposed to a bunch of walled gardens?

Huang: I really do hope it’s open and I think it will be open with Universal Scene Description (USD). As you know, we’re one of the largest contributors, the primary contributor, to USD today. And it was invented by Pixar. It’s been adopted by so many different content developers. Adobe adopted it, and we have gotten a lot of people to adopt it. I’m hoping that everybody will go to USD and then it will become essentially the HTML of the metaverse.

VB: What is your confidence level in the automotive division and how we are moving forward with self-driving cars? Do you feel like we are getting back on track after the pandemic?

Huang: I am certain that we will have autonomous vehicles all over the world. They all have their operating domains. And some of that is just within the boundaries of a very large warehouse. They call them AMRs, autonomous mobile robots. You could have them inside walled factories, and so they could be moving goods and inventory around. You could be delivering goods across the last mile, like Nuro and others. All these great companies that are doing last-mile delivery. All of those applications are very doable, so long as you don’t overpromise.

And I think there will be thousands and thousands of applications of autonomous vehicles, and it’s a sure thing. This year is going to be the inflection year for us on autonomous driving. This will be a big year for us. And then next year, it’ll be even bigger. And in 2025, that’s when we deploy our own software, where we do revenue sharing with the car companies.

And so if the license is $10,000, we share 50-50. If it’s a subscription base of $1,000 or $100 a month, we share 50-50. I think I’m pretty certain now that autonomous vehicles will be one of our largest businesses.
